Marketing data can exist in different sources, like social networks, third-party websites, and web analytics sites. This applies most commonly to supplies, like in industrial, retail or manufacturing settings. ETL allows for the automatic consolidation of this streaming data into a single location for further analysis. IoT data integrationįor example, a lot of data can be generated on a manufacturing floor from multiple sensors. The high-quality data in data warehouses can significantly accelerate the performance of ML models. With large data sets, you can create accurate machine learning models and perform classification and clustering tasks. Machine learning and artificial intelligence Cloud migrationĮTL processes are used to extract, transform and load data to cloud storage and databases when businesses migrate their systems and data to/from on-premises data centers to cloud environments.

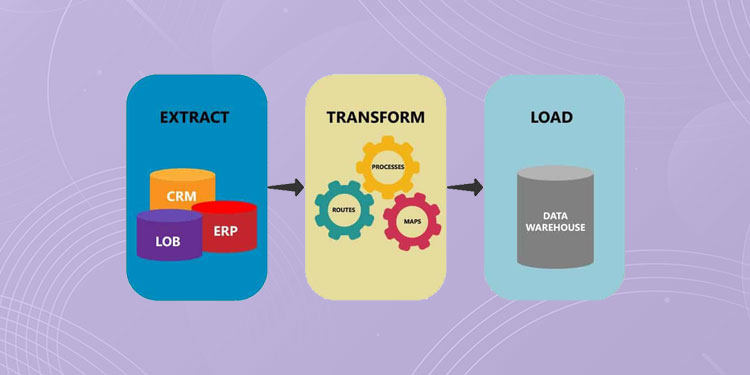

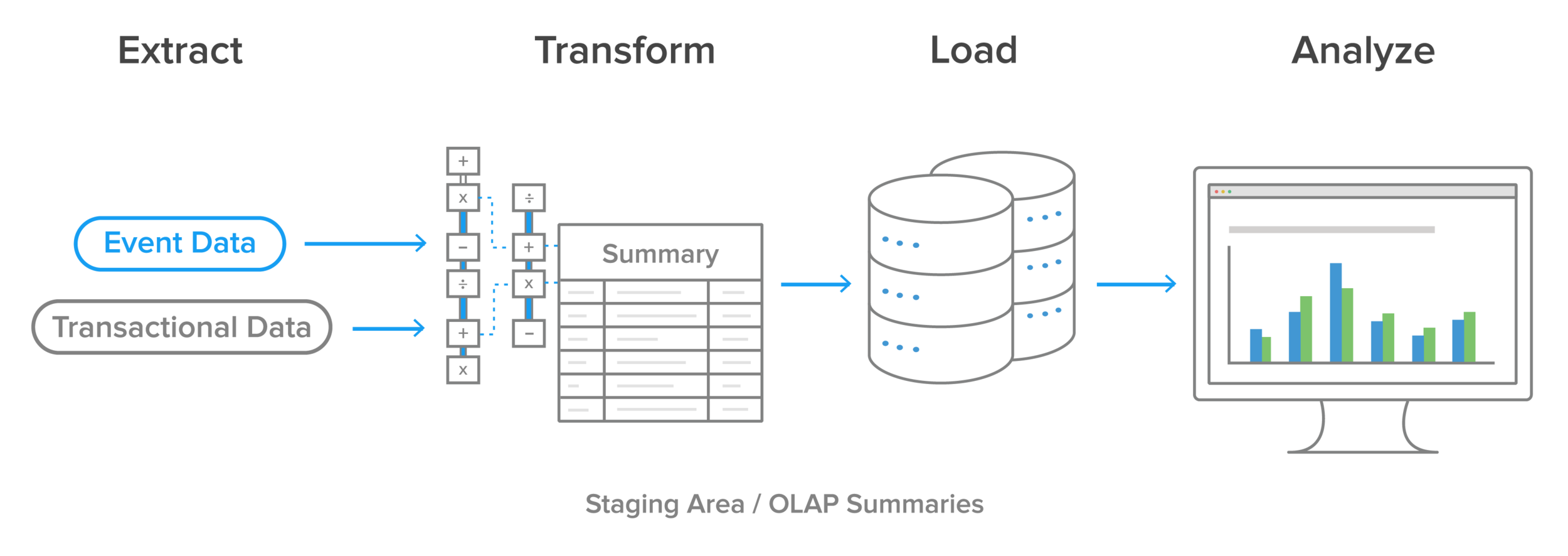

The use of ETL tools has rapidly grown in the past few years for common use cases like: Data warehousing for Business Intelligence (BI)Ĭollect, analyze and organize data for business intelligence tasks like OLAP (online analytical processing), business reporting and creating dashboards from the data. This streams data continuously, with a major caveat: this loading type is suitable for smaller data sets. Loading the data set batch-wise and periodically if it is too large. The ETL system should store the date and time the data was last extracted. Regularly loading only the updated data between the source and target systems. It involves extracting and transforming the data set from the data source to the data warehouse. This loading typically happens during the initial load. The following are methods for data loading: This process is well-defined, continuous and automated, and usually the loading happens batch-wise. Once the data extraction and processing steps are complete, the final process is loading the transformed data into the data warehouse from the staging area. (See how data normalization relates to the Transform step.) Step 3. Data encryption to comply with data privacy laws.Data derivation and creating new values.Data summarization to reduce the size of the data set.Revising data formats to ensure consistencyĪdditionally, you can apply advanced data transformation steps depending on the requirements.Validating and cleaning data to avoid errors.The processing can include the following tasks: During this phase, raw data gets processed in a staging area. The Transform phase involves converting data into a format that allows it to be loaded into consolidated data sources. Here, you extract only the data that has changed. Instead of extracting directly from the data source, the data is extracted to a separate staging area. Connect directly with the data source and extract the data. 
The Extract step includes validating the data and removing or flagging the invalid data. The source data can be structured, unstructured or semi-structured and in various formats, such as tables, JSON and XML. Enterprise Resource Planning (ERP) systems.Machine data and Internet of Things (IoT) sensors.Customer relationship management (CRM) systems.In this phase, raw data is extracted from multiple sources and stored in a single repository. Today, ETL is being used in all industries, including healthcare, manufacturing and finance, to make better-informed decisions and provide a better service to the end users.ĮTL comprises three steps: Extract, Transform, and Load, and we’ll go into each one. Get a consolidated view of all the data throughout the business.Many organizations now use ETL for various machine learning and big data analytics processes to facilitate business intelligence.īeyond simplifying data access for analysis and additional processing, ETL ensures data consistency and cleanliness across organizations. In its early days, ETL was used primarily for computation and data analysis. ETL is a foundational data management practice. Typically, this single data source is a data warehouse with formatted data suitable for processing to gain analytics insights. This article digs deeper into:ĮTL refers to the three processes of extracting, transforming and loading data collected from multiple sources into a unified and consistent database. ETL serves as the foundation to overcome this challenge. The challenge here is twofold: connecting these inconsistent data sets in multiple formats and leveraging the appropriate technology to derive valuable insights. But you cannot use that data as it’s gathered, primarily due to data inconsistency and varying quality. This data might go on to be used for business intelligence and many other use cases. In any business today, countless data sources generate data, some of it valuable.
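The Extract step pulls data in several formats (tables, JSON, XML) and validates it, removing or flagging invalid records. Below is a minimal sketch of the flagging variant. It assumes a CSV export and a JSON feed. The sample data and the helper names `extract_csv`, `extract_json`, `is_iso_date` and `flag_invalid` are hypothetical and serve only as illustration. Flagged records keep a list of reasons so they can be reviewed later rather than silently lost.

```python
import csv
import io
import json
from datetime import date

# Hypothetical raw extracts from two sources: a CRM export (CSV) and a
# sensor feed (JSON). All field names here are illustrative.
CRM_CSV = """customer_id,email,signup_date
1,ana@example.com,2024-01-05
2,,2024-02-11
3,li@example.com,not-a-date
"""
SENSOR_JSON = '[{"sensor_id": "s1", "temp_c": 21.5}, {"sensor_id": "s2", "temp_c": null}]'


def extract_csv(text):
    """Parse CSV text into a list of dicts, one per row."""
    return list(csv.DictReader(io.StringIO(text)))


def extract_json(text):
    """Parse a JSON array of objects into a list of dicts."""
    return json.loads(text)


def is_iso_date(value):
    """Return True if value parses as an ISO 8601 date string."""
    try:
        date.fromisoformat(value)
        return True
    except (TypeError, ValueError):
        return False


def flag_invalid(records, required, checks=None):
    """Split records into (valid, flagged). A record is flagged, not dropped,
    when a required field is empty or any field fails its check."""
    checks = checks or {}
    valid, flagged = [], []
    for rec in records:
        reasons = [f"missing {f}" for f in required if rec.get(f) in (None, "")]
        reasons += [f"invalid {f}" for f, ok in checks.items() if not ok(rec.get(f))]
        if reasons:
            flagged.append({**rec, "_reasons": reasons})
        else:
            valid.append(rec)
    return valid, flagged


crm_ok, crm_bad = flag_invalid(
    extract_csv(CRM_CSV), ["customer_id", "email"], {"signup_date": is_iso_date}
)
sensor_ok, sensor_bad = flag_invalid(extract_json(SENSOR_JSON), ["sensor_id", "temp_c"])
print(len(crm_ok), [r["_reasons"] for r in crm_bad])
# prints: 1 [['missing email'], ['invalid signup_date']]
```

Flagging rather than deleting is a design choice. It lets someone fix the bad rows at the source, at the cost of keeping a second list around.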
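Extracting only the data that has changed, and loading incrementally, both rely on the ETL system storing the date and time of its last extraction. A common way to do this is a "watermark". The sketch below assumes each source row carries an `updated_at` timestamp. `WatermarkStore` and `extract_changed` are hypothetical names. The in-memory store stands in for whatever a real pipeline would persist, such as a file or a control table.

```python
from datetime import datetime, timezone

# Hypothetical source rows, each stamped with its last-modified time.
SOURCE = [
    {"id": 1, "updated_at": datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)},
    {"id": 2, "updated_at": datetime(2024, 5, 2, 9, 0, tzinfo=timezone.utc)},
    {"id": 3, "updated_at": datetime(2024, 5, 3, 9, 0, tzinfo=timezone.utc)},
]


class WatermarkStore:
    """In-memory stand-in for persisted 'last extracted' timestamps, per source."""

    def __init__(self):
        self._marks = {}

    def get(self, source):
        return self._marks.get(source)

    def set(self, source, ts):
        self._marks[source] = ts


def extract_changed(rows, store, source="orders"):
    """Return rows updated after the stored watermark, then advance it.
    With no watermark yet, every row is returned (a full extraction)."""
    last = store.get(source)
    changed = [r for r in rows if last is None or r["updated_at"] > last]
    if changed:
        # Advance to the newest timestamp seen in the data, not the wall clock,
        # so clock differences between systems cannot skip rows.
        store.set(source, max(r["updated_at"] for r in changed))
    return changed
```

On the first run every row comes back. Later runs return only rows with a newer `updated_at`. The strict `>` comparison can miss rows that share the last timestamp but commit late. Production systems often work around this with an overlap window or a monotonically increasing sequence number instead.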
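The Transform tasks listed above are validating and cleaning, revising formats, summarizing, deriving values, and protecting sensitive fields. They can be sketched on a few rows in a staging list. The sample rows and the helpers `parse_date`, `pseudonymize`, `transform` and `summarize` are hypothetical. One substitution is worth stating plainly: the privacy step here uses salted SHA-256 hashing (pseudonymization) as a stand-in, which is not encryption.

```python
import hashlib
from collections import defaultdict
from datetime import datetime

# Hypothetical staged rows with deliberately inconsistent formats.
RAW = [
    {"order_id": "A1", "email": " Ana@Example.com ", "date": "2024-05-01", "qty": "2", "unit_price": "9.50"},
    {"order_id": "A2", "email": "li@example.com", "date": "05/01/2024", "qty": "1", "unit_price": "20"},
    {"order_id": "A3", "email": "bo@example.com", "date": "2024-05-02", "qty": "x", "unit_price": "5"},
]


def parse_date(value):
    """Revise formats: accept ISO or US-style dates and return ISO 8601."""
    for fmt in ("%Y-%m-%d", "%m/%d/%Y"):
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date: {value!r}")


def pseudonymize(email, salt="demo-salt"):
    # One-way salted hash as a privacy stand-in; NOT encryption. A real
    # pipeline would use a vetted crypto library with proper key management.
    return hashlib.sha256((salt + email).encode()).hexdigest()[:16]


def transform(rows):
    clean = []
    for r in rows:
        try:
            qty = int(r["qty"])
            price = float(r["unit_price"])
            day = parse_date(r["date"])
        except ValueError:
            continue  # cleaning: drop rows that fail validation
        email = r["email"].strip().lower()  # normalize format before hashing
        clean.append({
            "order_id": r["order_id"],
            "customer": pseudonymize(email),
            "date": day,
            "qty": qty,
            "revenue": round(qty * price, 2),  # derivation: a new value
        })
    return clean


def summarize(rows):
    """Summarization: total revenue per day, shrinking the data set."""
    totals = defaultdict(float)
    for r in rows:
        totals[r["date"]] += r["revenue"]
    return dict(totals)


print(summarize(transform(RAW)))
# prints: {'2024-05-01': 39.0}
```

Row A3 is dropped because its quantity is not a number. Row A2's US-style date is normalized, so both surviving orders fall on the same day.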
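The difference between a full load and an incremental load shows up clearly against a small target table. The sketch below uses Python's built-in `sqlite3` module as a stand-in for a data warehouse. The `orders` table and the functions `create_target`, `full_load` and `incremental_load` are hypothetical. The upsert syntax (`ON CONFLICT ... DO UPDATE`) requires SQLite 3.24 or later.

```python
import sqlite3


def create_target(conn):
    """Create a hypothetical warehouse table keyed by order_id."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        " order_id TEXT PRIMARY KEY, qty INTEGER, revenue REAL)"
    )


def full_load(conn, rows):
    """Full load: replace the table's contents with the entire data set."""
    with conn:  # one transaction: commit on success, roll back on error
        conn.execute("DELETE FROM orders")
        conn.executemany(
            "INSERT INTO orders (order_id, qty, revenue) "
            "VALUES (:order_id, :qty, :revenue)",
            rows,
        )


def incremental_load(conn, changed_rows):
    """Incremental load: insert new rows and update changed ones (upsert)."""
    with conn:
        conn.executemany(
            "INSERT INTO orders (order_id, qty, revenue) "
            "VALUES (:order_id, :qty, :revenue) "
            "ON CONFLICT(order_id) DO UPDATE SET "
            "qty = excluded.qty, revenue = excluded.revenue",
            changed_rows,
        )
```

For example, `full_load` with orders A1 and A2 followed by `incremental_load` with an updated A2 and a new A3 leaves three rows. Only A2 and A3 are written on the second pass. Wrapping each load in `with conn:` keeps a failed load from leaving the table half-written.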
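The two forms of incremental loading, batch and streaming, differ mainly in how many records are handed to the target at once. Below is a minimal sketch in which a `sink` callable stands in for the write to the warehouse (for example, one transaction per call). The function names are hypothetical. `batches` uses `itertools.islice` so it works on any iterable, including one too large to hold in memory.

```python
from itertools import islice


def batches(iterable, size):
    """Yield lists of up to `size` items from any iterable."""
    it = iter(iterable)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def load_in_batches(records, size, sink):
    """Batch load: hand the target one fixed-size chunk at a time.
    Returns the number of batches written."""
    count = 0
    for chunk in batches(records, size):
        sink(chunk)
        count += 1
    return count


def load_streaming(records, sink):
    """Streaming load: push each record to the target as soon as it arrives."""
    for rec in records:
        sink([rec])
```

Loading ten records with a batch size of four produces three writes (4, 4 and 2 records). Streaming the same data would produce ten writes. That per-record overhead is one reason streaming suits smaller data volumes.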
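The IoT use case describes consolidating streaming sensor data into a single location. One small piece of that is merging several time-ordered feeds into one ordered stream. The sketch below assumes each feed is already sorted by timestamp and uses `heapq.merge`, which merges sorted inputs lazily without loading them all into memory. The feeds, sensor names and helper functions are hypothetical.

```python
import heapq
from datetime import datetime

# Hypothetical per-sensor feeds of (timestamp, sensor, value),
# each already sorted by timestamp.
line_a = [("2024-05-01T08:00:00", "press-1", 71.2), ("2024-05-01T08:00:10", "press-1", 71.9)]
line_b = [("2024-05-01T08:00:05", "oven-3", 180.4), ("2024-05-01T08:00:15", "oven-3", 181.0)]


def consolidate(*feeds):
    """Lazily merge time-ordered feeds into a single time-ordered stream."""
    return heapq.merge(*feeds, key=lambda reading: datetime.fromisoformat(reading[0]))


def to_rows(stream):
    """Shape merged readings into uniform records for loading."""
    return [{"ts": ts, "sensor": sensor, "value": value} for ts, sensor, value in stream]
```

`to_rows(consolidate(line_a, line_b))` interleaves the two feeds by time: press-1, oven-3, press-1, oven-3.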
